Static Code Analysis for Incident Root Cause and Evidence Recovery

Background: During incident response, specialists often need to understand the root cause of an incident as quickly as possible, because that understanding helps us mitigate the issue and restore services faster. It is also a major challenge for any team: we must respond to the incident while simultaneously analyzing artifacts and evidence.

This is where AI technologies come in: used together with MCP (Model Context Protocol) capabilities, they can provide significant help. Let’s take an example: suppose we need to quickly understand how attackers obtained the connection keys (or access credentials) to our organization’s crown jewels, and then used them to exfiltrate data or carry out other harmful actions as part of a sophisticated attack.

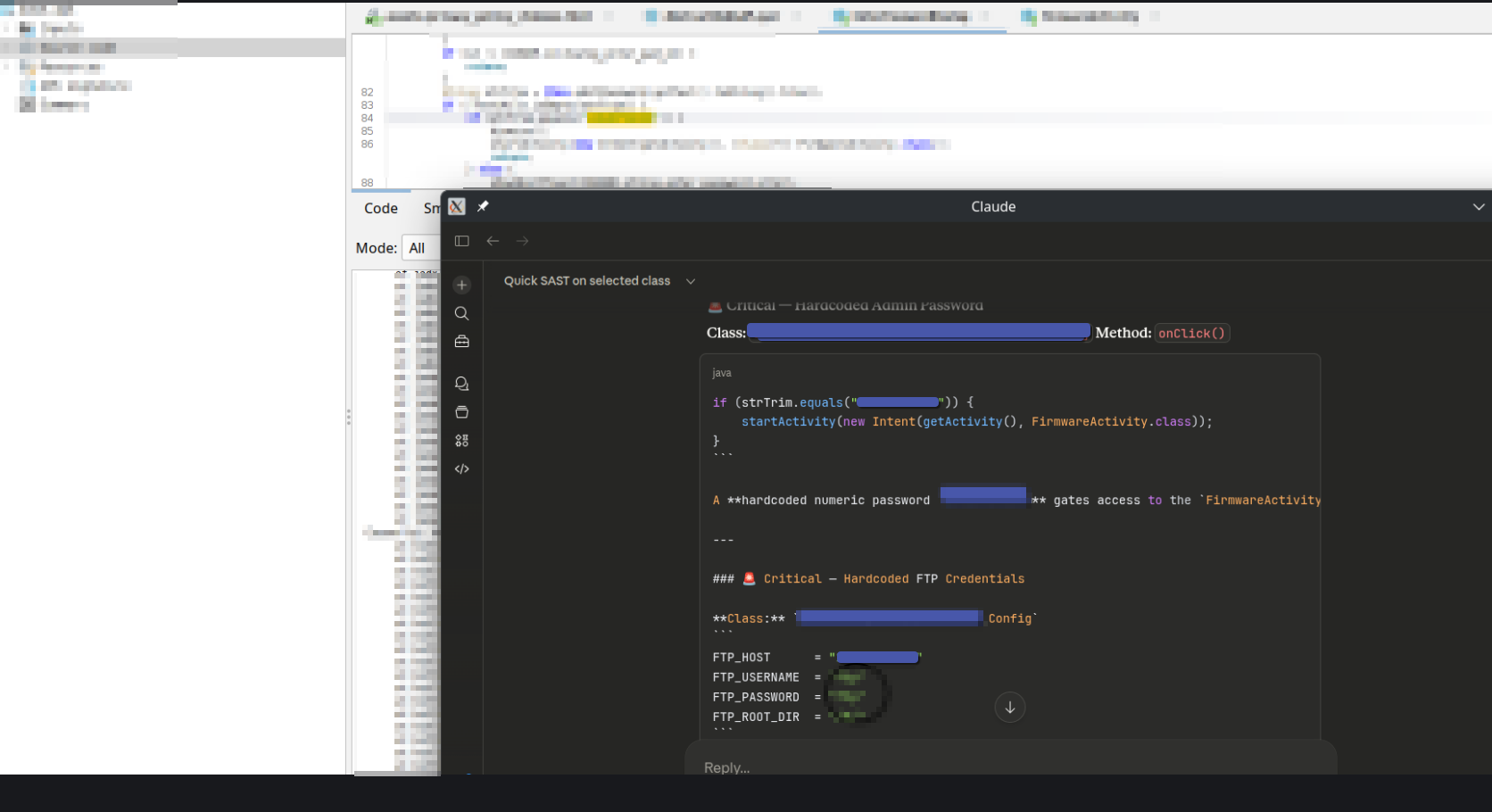

Application Breach Root Cause: In this scenario, our DEMO company uses certain technologies to deliver its services to customers, and it also publishes an Android APK on the Google Play Store. We now need to explore hypotheses. One possible hypothesis is that developers hardcoded critical keys inside the application; a threat actor then analyzed the app, extracted those keys, connected to the crown jewels, and carried out their malicious actions.

Required action items for us as IR specialists:

- Use an APK decompiler.

- Use an MCP server for that decompiler, so that an AI model can carry out the analysis tasks through it.
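The decompilation step can be sketched as a thin wrapper around a command-line decompiler. This is a minimal sketch under assumptions: the text does not name a specific tool, so we assume the open-source jadx decompiler is installed and on `PATH`, and the helpers `build_jadx_command` and `decompile_apk` are hypothetical names used only for illustration. The only jadx option used is `-d` (output directory).

```python
import shutil
import subprocess
from pathlib import Path


def build_jadx_command(apk_path: str, out_dir: str) -> list[str]:
    """Hypothetical helper: build the jadx invocation `jadx -d <out_dir> <apk_path>`."""
    return ["jadx", "-d", out_dir, apk_path]


def decompile_apk(apk_path: str, out_dir: str) -> tuple[Path, int]:
    """Hypothetical helper: decompile an APK into sources and resources under out_dir.

    Assumes the jadx CLI is installed and on PATH. Returns the output directory
    and jadx's exit code; a non-zero code is returned rather than raised so the
    analyst can decide whether partial output is still usable.
    """
    apk = Path(apk_path)
    if not apk.is_file():
        raise FileNotFoundError(f"APK not found: {apk}")
    if shutil.which("jadx") is None:
        raise FileNotFoundError("jadx executable not found on PATH")
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    # check=False: surface the exit code to the caller instead of raising.
    result = subprocess.run(build_jadx_command(str(apk), str(out)), check=False)
    return out, result.returncode
```

For example, `out, rc = decompile_apk("demo.apk", "demo_src")` would place the decompiled tree under `demo_src`. An MCP server for the decompiler would expose operations like this as tools the model can call; that wiring is not shown here.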

Example: In this demo APK, the key step is to decompile the APK file first. Then we can use an AI model (via our MCP capabilities) to analyze the decompiled code and search for sensitive data embedded in it, such as hardcoded API keys, connection strings, credentials, or other secrets.
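The search for embedded secrets can be illustrated with a plain regex pass over the decompiled tree. This is a minimal sketch, not the AI/MCP workflow itself: a deterministic pre-scan whose findings an analyst, or the model, could then triage. The rule names, regex patterns, scanned file suffixes, and the `scan_tree` and `Finding` names are all illustrative assumptions and far from exhaustive; dedicated secret scanners ship much larger rule sets.

```python
import re
from dataclasses import dataclass
from pathlib import Path

# Illustrative rules only (assumptions, not an exhaustive rule set).
SECRET_PATTERNS = {
    # Google API keys commonly look like "AIza" followed by 35 URL-safe characters.
    "google_api_key": re.compile(r"AIza[0-9A-Za-z_\-]{35}"),
    # AWS access key IDs commonly look like "AKIA" followed by 16 uppercase alphanumerics.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    # URL-style connection strings for a few common data stores.
    "connection_string": re.compile(
        r"(?:jdbc:[a-z]+|mongodb(?:\+srv)?|postgres(?:ql)?|mysql|redis)://[^\s\"'<>]+",
        re.IGNORECASE,
    ),
    # Quoted values (8+ chars) assigned to credential-like names.
    "credential_assignment": re.compile(
        r"(?:password|passwd|secret|api[_-]?key|token)\s*[:=]\s*[\"'][^\"']{8,}[\"']",
        re.IGNORECASE,
    ),
}

# Text file types worth scanning in a decompiled tree (assumed list).
SCANNED_SUFFIXES = {".java", ".kt", ".smali", ".xml", ".json", ".properties", ".txt"}


@dataclass(frozen=True)
class Finding:
    path: str
    line_no: int
    rule: str
    match: str


def scan_tree(root: str) -> list[Finding]:
    """Return every regex hit in text files under root, in sorted path order."""
    findings = []
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or path.suffix.lower() not in SCANNED_SUFFIXES:
            continue
        text = path.read_text(encoding="utf-8", errors="replace")
        for line_no, line in enumerate(text.splitlines(), start=1):
            for rule, pattern in SECRET_PATTERNS.items():
                for m in pattern.finditer(line):
                    findings.append(Finding(str(path), line_no, rule, m.group(0)))
    return findings
```

Each `Finding` is only a candidate; confirming that a key is real, still valid, and actually grants access to the crown jewels remains part of the investigation. Because the findings contain the secrets themselves, they fall under the same TLP and data-handling rules as the rest of the evidence.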

The scope of MCP capabilities in incident response is not limited to the examples discussed here. They can be applied to any operation related to responding to cybersecurity incidents, provided the legal team has granted permission to do so.

The method described should only be applied when the information is classified as TLP:CLEAR. Otherwise, the model and all AI processing must be strictly limited to environments and data within the corporate scope (i.e., fully controlled internal systems with no external data sharing).
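The legal-permission and TLP rules in the two paragraphs above can be expressed as a small gate that runs before any evidence is sent to a model. A minimal sketch, assuming the TLP 2.0 label set published by FIRST; the function `may_send_to_model` and its parameters are hypothetical, and treating an unknown or missing label as "do not send" (fail closed) is our own assumption rather than something the text specifies.

```python
from enum import Enum


class TLP(Enum):
    """TLP 2.0 labels (FIRST)."""
    CLEAR = "TLP:CLEAR"
    GREEN = "TLP:GREEN"
    AMBER = "TLP:AMBER"
    AMBER_STRICT = "TLP:AMBER+STRICT"
    RED = "TLP:RED"


def may_send_to_model(label: str, model_is_internal: bool, legal_approved: bool) -> bool:
    """Hypothetical gate: may evidence with this TLP label go to this model?

    - Without legal approval, nothing is sent.
    - TLP:CLEAR evidence may go to any model.
    - Any other label requires a model in a fully controlled internal environment.
    - Unknown or missing labels fail closed (an assumption, not from the text).
    """
    if not legal_approved:
        return False
    try:
        tlp = TLP(label.strip().upper())
    except ValueError:
        return False
    return tlp is TLP.CLEAR or model_is_internal
```

Failing closed keeps unlabeled evidence away from any model until someone classifies it; a stricter organization might also choose to block TLP:RED entirely, which this sketch does not do.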

Conclusion: In the era of AI, we should leverage tools that make our work more efficient. At the same time, there must be a clear boundary between private information and publicly available information—one that is strictly controlled by organizational policies, data classification rules (such as TLP), and compliance requirements.